Introducing LTM-1

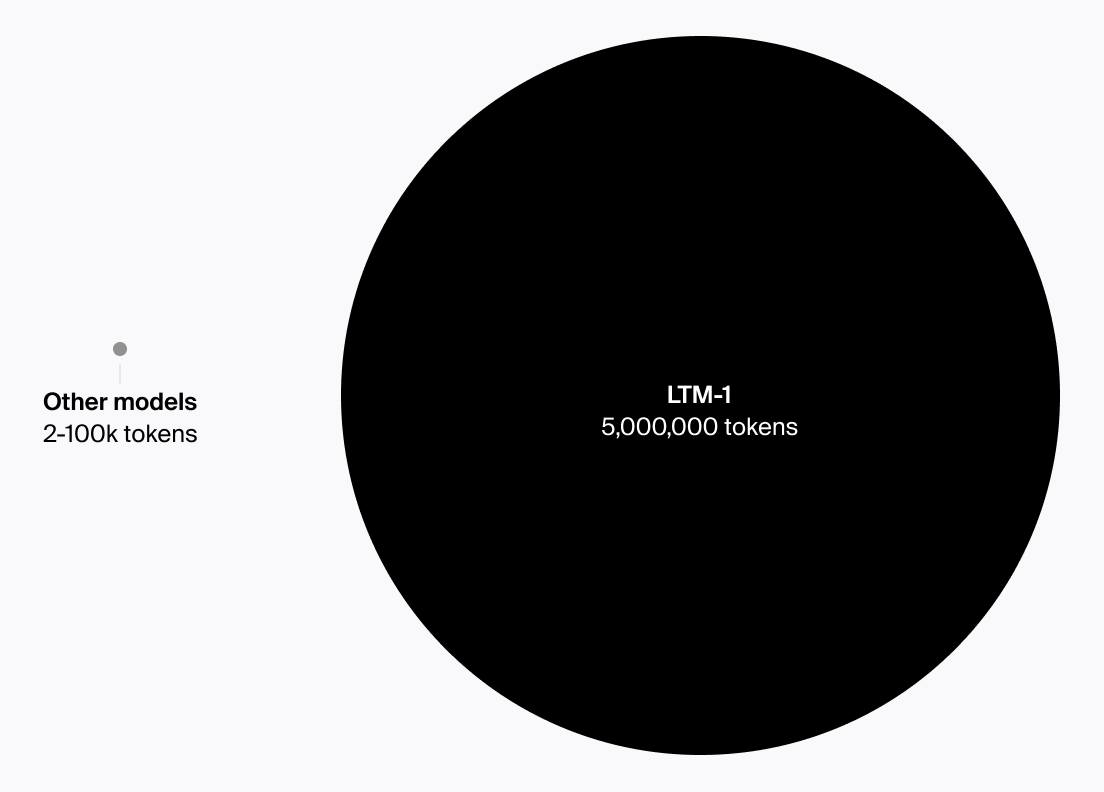

Magic's LTM-1 model has a 5,000,000-token context window, allowing it to see your entire repository of code.

Magic Team, on June 6, 2023

Magic has trained a Large Language Model (LLM) that’s able to take in gigantic amounts of context when generating suggestions. For our coding assistant, this means Magic can now see your entire repository of code.

Towards trustworthy, grounded AI

Larger context windows can allow AI models to reference more explicit, factual information and their own action history. We hope to use this research to improve reliability and coherence.